research project to

explore the issues related to the compilation of floating-point

programs.

research project to

explore the issues related to the compilation of floating-point

programs.

research project to

explore the issues related to the compilation of floating-point

programs.

research project to

explore the issues related to the compilation of floating-point

programs.Thanks to widespread hardware support, IEEE 754 floating-point computations are increasingly finding their way into embedded C code, and in particular into critical embedded C code.

Floating-point computations are notorious for the traps they lay on the programmer's path. The programmer who uses them should be aware that the result of a floating point operation is (depending on the rounding mode and on the operation itself) only one of the real numbers that can be represented as a floating-point number and that approximates more or less closely the actual result (as computed in the reals). In the worse cases, these approximations may accumulate and compound each other to the point that the final result obtained with floating-point computations is meaningless.

The Hisseo project (that started in October 2008) does not intend to tackle these issues, which are being adressed elsewhere. The Hisseo project will focus on the problems related to the treatment of floating-point computations in the compilation process, especially in the case of the compilation of critical C code.

The compilation of IEEE 754 computations in a C program was not defined univocally until the C99 standard, and as of 2008, many compilers still implement the loose semantics of the previous standard (the previous standard allows reordering floating-point operations as if they were associative, when they aren't. The C99 standard mandates left-to-right ordering). Compiler makers focus on performance, and are not in a hurry to implement a subpart of the standard that removes optimization opportunities.

This situation is unlikely to improve quickly, because the majority of programmers are not concerned by details of this level.Instruction sets of several popular architectures create performance penalties for trying to do the right thing. Processor designers try to get an edge over the competition by introducing ``improved'' floating-point instructions in their architectures. Architectures evolve very slowly (new models of processors come out all the time, but they have to be compatible with previous models). The historical Intel 8087 floating-point coprocessor introduced a 80-bit format that causes ``double rounding'' problems for the float and double C types. The PowerPC introduced a Floating Multiply-Add instruction which does not always produce the same result as a a multiplication followed by an addition.

The processor designers mean well! 80 bits is more precise than 32 or 64. The fmadd instruction introduces less rounding than a multiplication followed by an addition. But, because it is impossible to guess whether the compiler will be able to keep the results in 80-bit registers or use the special instructions, it becomes impossible to predict what the binary will compute looking only at the source codeThe choice of a compiler for critical embedded C code is already quite constrained as it is. It is not always feasible to choose the compiler that implements the C99 version of the standard with respect to floating-point computations, even assuming that one can be found.

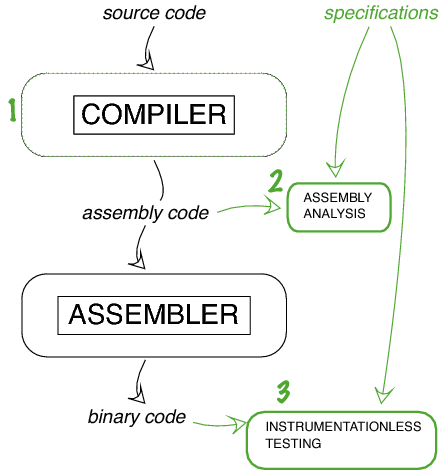

To be acceptable to producers of critical C code, a solution may need to take the reverse-engineering path, and obtain the necessary information from the output of the compilerIn this subtask, formal semantics for floating point computations will be defined and be put to use in the formal verification of a C compiler.

At the assembly level, almost all the sources of non-determinism in the computations have disappeared, but some of the information regarding the structure of the program remains. This is the ideal point to verify by static analysis the translation done by an off-the-shelf C compiler.

A proven approach to the verification of run-time properties is to insert sanity checks within the source code. Unfortunately, in the case of floating-point computations, any modification of the source code may change other, unrelated computations because optimization opportunities may appear or disappear with the modifications. Hence, the tested binary computes differently from the binary finally executed. The tests may fail to find a problem that appears only in the latter.

One way to improve the trustability of tests is to make sure that the tested binary is the same as the finally executed binary.